Predicting patient outcomes from imaging has moved from hopeful theory to practical reality in many clinics. With better models and larger image sets, clinicians can get an early read on likely recovery paths and risks.

For teams that pair medical know-how with smart algorithms, the goal is to turn pixels into useful signals that drive care. The next sections map out how to get started and what to watch for when you want imaging to inform outcome prediction.

Overview Of AI-Driven Outcome Prediction

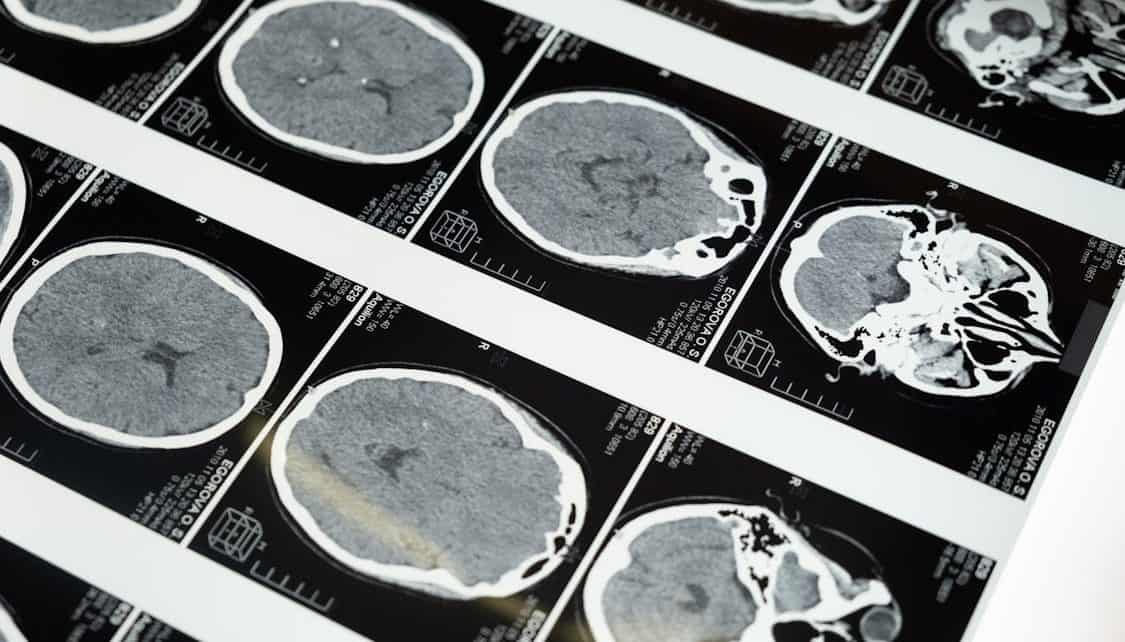

Medical images carry patterns that often go unnoticed by the naked eye yet are useful for forecasting patient trajectories. AI-driven models learn statistical links between image features and later events such as recovery speed or complication risk.

When models perform well, they offer a probability-based view that supports clinical judgment without replacing it. Models work best when trained on clean data sets that reflect the range of real-life cases clinicians see.

Data Collection And Management

Good prediction starts with the right data and careful handling of that data over time. Assemble image sets that cover different scanners, protocols, and patient groups so that the model does not get stuck on a narrow view.

Metadata such as demographics, outcome dates, and treatment details matter because they allow image changes to be correlated with clinical end points. Store images and labels in a way that makes tracking provenance and updates simple for future retraining.
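
One way to keep provenance traceable is to store a small structured record next to each image. The sketch below is only an illustration: the `ImageRecord` schema, its field names, and the sample values are hypothetical, and a real deployment would more likely build on DICOM metadata and an existing data catalogue.

```python
import hashlib
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ImageRecord:
    """One stored study plus the metadata needed to trace it later (hypothetical schema)."""
    patient_id: str
    scanner_model: str
    protocol: str
    acquired_on: str    # ISO date of the scan
    outcome: str        # clinical end point label
    outcome_on: str     # ISO date the outcome was recorded
    label_version: int  # bump whenever the label is revised
    pixel_sha256: str   # fingerprint of the stored pixel data


def fingerprint(pixel_bytes: bytes) -> str:
    """Return a SHA-256 hex digest so silent changes to stored pixels can be detected."""
    return hashlib.sha256(pixel_bytes).hexdigest()


# Illustrative values only; none of these come from a real data set.
record = ImageRecord(
    patient_id="P001",
    scanner_model="VendorA-3T",
    protocol="T1w",
    acquired_on="2023-01-10",
    outcome="recovered",
    outcome_on="2023-04-02",
    label_version=1,
    pixel_sha256=fingerprint(b"\x00\x01\x02"),
)
print(asdict(record))
```

Keeping a label version and a pixel fingerprint in the record makes it possible to tell, at retraining time, which labels or images changed since the last model was built.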

Labelling And Annotation

Accurate labels are the backbone of supervised prediction work in imaging. Clinical end points must be defined clearly for everyone who marks images so that inter-rater variation is reduced and label noise is kept low.
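
Inter-rater variation can be quantified before any model is trained. A minimal sketch using Cohen's kappa for two raters follows; the choice of kappa and the example labels are assumptions for illustration, and other agreement statistics may suit multi-rater or ordinal settings better.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters labelling the same cases."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(rater_a)
    # Fraction of cases where the raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two readers on the same four scans.
reader_1 = ["pos", "pos", "neg", "neg"]
reader_2 = ["pos", "neg", "neg", "neg"]
print(cohen_kappa(reader_1, reader_2))
```

A low kappa on a pilot batch is usually a sign that the end point definition needs tightening before more cases are labelled.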

Use structured annotation tools and capture both local findings and global summary notes so the model can learn multiple levels of association. Regular quality checks and an adjudication workflow help keep label drift in check as studies accrue.

Preprocessing And Augmentation

Image preprocessing makes raw scans comparable and reduces signal variance that confuses learning algorithms. Steps like intensity normalization, spatial registration, and cropping to relevant anatomy help the model focus on meaningful variation.
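
Two of these steps, intensity normalization and cropping, can be sketched in a few lines. The example below works on a 2D image held as a plain list of rows to stay dependency-free; z-score normalization and a center crop are assumed choices, real pipelines typically use an array library, and registration is left out because it needs dedicated tooling.

```python
from statistics import mean, pstdev


def zscore_normalize(image):
    """Rescale a 2D list of intensities to zero mean and unit variance."""
    flat = [v for row in image for v in row]
    mu, sigma = mean(flat), pstdev(flat)
    if sigma == 0:
        # A constant image carries no contrast; map it to all zeros.
        return [[0.0 for _ in row] for row in image]
    return [[(v - mu) / sigma for v in row] for row in image]


def center_crop(image, height, width):
    """Keep the central height x width window of a 2D list."""
    rows, cols = len(image), len(image[0])
    if height > rows or width > cols:
        raise ValueError("crop window is larger than the image")
    top, left = (rows - height) // 2, (cols - width) // 2
    return [row[left:left + width] for row in image[top:top + height]]


# Toy 4x4 "scan" with the anatomy of interest in the middle.
scan = [
    [0, 0, 0, 0],
    [0, 10, 20, 0],
    [0, 30, 40, 0],
    [0, 0, 0, 0],
]
cropped = center_crop(scan, 2, 2)
normalized = zscore_normalize(cropped)
```

Cropping before normalizing, as here, keeps background pixels from dominating the mean and variance; whether that order is right depends on the modality.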

Augmentation that reflects plausible changes in acquisition or anatomy can boost generalization by exposing the model to variations it might meet in practice. Avoid synthetic transforms that introduce impossible anatomy or artifacts that do not mirror real scanner behavior.
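
As a rough illustration of acquisition-like augmentation, the sketch below applies a small gain and bias change and a few-pixel translation. The function names and the default limits (`max_gain`, `max_bias`, `max_shift`) are hypothetical, the intensity limits assume images already normalized to roughly unit scale, and suitable ranges depend on the modality.

```python
import random


def jitter_intensity(image, rng, max_gain=0.1, max_bias=0.05):
    """Apply one random gain and bias to the whole image, mimicking scanner variation."""
    gain = 1.0 + rng.uniform(-max_gain, max_gain)
    bias = rng.uniform(-max_bias, max_bias)
    return [[gain * v + bias for v in row] for row in image]


def shift_columns(image, rng, max_shift=2, fill=0.0):
    """Translate the image left or right by a few pixels, padding with `fill`.

    Assumes max_shift is smaller than the image width.
    """
    shift = rng.randint(-max_shift, max_shift)
    width = len(image[0])
    shifted = []
    for row in image:
        if shift >= 0:
            shifted.append([fill] * shift + row[:width - shift])
        else:
            shifted.append(row[-shift:] + [fill] * (-shift))
    return shifted


rng = random.Random(0)  # seeded so augmentations are reproducible
image = [[1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 4.0]]
augmented = jitter_intensity(shift_columns(image, rng), rng)
```

Mirroring is deliberately left out here because left-right flips can produce anatomy that does not occur in practice.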

Model Selection And Training

Choosing the right model involves trade-offs between complexity, training time, and interpretability. Start with simpler architectures that are easier to inspect, and then scale to deeper networks when data volume grows and training resources are available.

Training needs careful tuning of class balance, learning rates, and early stopping rules to prevent overfitting to a particular subset of cases. Mix image-driven inputs with clinical variables when those extra features help prediction rather than muddy the signal.
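
Class balance and early stopping can be handled independently of any particular framework. The sketch below assumes inverse-frequency class weights and a patience-based stopping rule on validation loss; both are common choices rather than the only ones, and the names and example losses are made up for illustration.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n / (k * count) so rare outcomes count more in the loss."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * counts[c]) for c in counts}


class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical data: 8 uneventful recoveries, 2 complications.
weights = inverse_frequency_weights(["neg"] * 8 + ["pos"] * 2)

stopper = EarlyStopping(patience=2)
for epoch, loss in enumerate([0.9, 0.7, 0.71, 0.72, 0.6]):
    if stopper.should_stop(loss):
        print(f"stopping after epoch {epoch}")
        break
```

The weights would be passed to whatever loss function the training framework provides, and the stopping check would run once per epoch on a validation set that is kept separate from the final test set.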

Validation And Evaluation

Robust evaluation separates lucky fits from models that will hold up with new patients. Use held-out test sets and cross-validation to measure how well predictions generalize across unseen scans and different patient groups.
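
One detail worth making explicit, as an assumption added here rather than something stated above, is that folds are usually built per patient so that scans from the same person never land in both training and test data. A minimal sketch with hypothetical patient IDs:

```python
def patient_level_folds(scan_patient_ids, n_folds=5):
    """Assign scan indices to folds so all scans from one patient share a fold.

    Patients are distributed round-robin in sorted order; shuffling and
    stratification by outcome are omitted to keep the sketch short.
    """
    patients = sorted(set(scan_patient_ids))
    if n_folds < 2 or n_folds > len(patients):
        raise ValueError("n_folds must be between 2 and the number of patients")
    fold_of = {p: i % n_folds for i, p in enumerate(patients)}
    folds = [[] for _ in range(n_folds)]
    for index, patient in enumerate(scan_patient_ids):
        folds[fold_of[patient]].append(index)
    return folds


# Six scans from four patients; patients A and C each have two scans.
scan_patient_ids = ["A", "A", "B", "C", "C", "D"]
folds = patient_level_folds(scan_patient_ids, n_folds=2)
```

Each fold then serves once as the test set while the remaining folds are used for training.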

Report a range of metrics that capture calibration, discrimination, and clinical utility rather than relying on a single score. Perform error analysis to find patterns in failures, and use those findings to guide more targeted data collection or model changes.
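
The three kinds of metric can be illustrated with one representative each. The choices below are assumptions for the sketch: ROC AUC for discrimination, the Brier score as a measure that is sensitive to calibration, and decision-curve net benefit at a chosen risk threshold for clinical utility. The labels and probabilities are made up.

```python
def roc_auc(y_true, y_prob):
    """Probability that a random positive case scores above a random negative one."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome (lower is better)."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def net_benefit(y_true, y_prob, threshold):
    """Decision-curve net benefit of acting on every case at or above `threshold`."""
    n = len(y_true)
    tp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 1)
    fp = sum(1 for y, p in zip(y_true, y_prob) if p >= threshold and y == 0)
    return tp / n - (fp / n) * (threshold / (1.0 - threshold))


# Toy example: 1 = complication occurred, 0 = it did not.
y_true = [0, 0, 1, 1]
y_prob = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(y_true, y_prob), brier_score(y_true, y_prob), net_benefit(y_true, y_prob, 0.3))
```

Reporting these per scanner or per patient group, rather than only overall, is one way to surface the failure patterns that error analysis looks for.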

Integration Into Clinical Workflow

Predictions are only useful when they arrive at the right time and in a usable format for care teams. Embed outputs into reporting pipelines or decision support tools so that findings fit into existing clinician tasks rather than adding extra steps.

Early implementations in outpatient practices suggest that timely integration improves both adoption and confidence among staff.

Provide clear risk estimates and explain common failure modes so staff know how much weight to place on the prediction. Pilot deployments with small user groups let you refine both the presentation and the timing of when predictions are surfaced.

Explainability And Communication

Clinicians need a way to bridge the black box when model outputs influence care choices. Visual explanations that highlight image regions tied to a prediction can help build trust by linking numbers back to familiar anatomy.

Present confidence bounds and scenario-based examples to show when the model is likely to be reliable and when caution is advised. Plain-language summaries that translate probability into likely outcomes for a particular patient help teams discuss implications with patients and family.
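
A plain-language summary can be as simple as restating the probability and its bounds as counts out of 100. The wording template and the function name below are hypothetical; the bounds are assumed to come from whatever uncertainty estimate the model provides, and any real phrasing should be reviewed by clinicians.

```python
def plain_language_risk(prob, lower, upper, outcome="a complication"):
    """Turn a probability and its bounds into a sentence using counts out of 100."""
    if not (0.0 <= lower <= prob <= upper <= 1.0):
        raise ValueError("expected 0 <= lower <= prob <= upper <= 1")
    per_100 = round(prob * 100)
    low_100, high_100 = round(lower * 100), round(upper * 100)
    return (
        f"About {per_100} in 100 similar patients are expected to have {outcome} "
        f"(plausible range {low_100} to {high_100} in 100)."
    )


print(plain_language_risk(0.23, 0.15, 0.31))
```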

Regulatory And Ethical Issues

Predictive tools in imaging sit at the intersection of safety law and patient rights and must be treated with care. Data governance practices must protect patient privacy while allowing enough access for training and validation that models learn real-world variation.

Documentation covering intended use, limits, training data, and known failure modes supports both approvals and good clinical practice. Ongoing monitoring after deployment is necessary to detect model drift or new biases that affect fairness across groups.

Continuous Learning And Maintenance

A model that worked well at launch can lose performance as imaging protocols, diagnosis patterns, or treatments shift over time. Establish a monitoring plan with automated checks that flag drops in key performance metrics and unusual output distributions.
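
An automated check of this kind might compare recent predicted probabilities against a reference window and watch a headline metric at the same time. The sketch below uses the population stability index (PSI) for the output distribution and a simple AUC drop rule; the metric choices, function names, and the thresholds `max_auc_drop=0.05` and `max_psi=0.2` are illustrative assumptions that each site would need to set for itself.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two sets of predicted probabilities in [0, 1]; 0 means identical bins."""

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Clamp so that a probability of exactly 1.0 falls in the last bin.
            counts[min(int(v * n_bins), n_bins - 1)] += 1
        # Floor empty bins at eps to keep the logarithm finite.
        return [max(c / len(values), eps) for c in counts]

    ref, cur = bin_fractions(reference), bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def needs_review(baseline_auc, current_auc, psi, max_auc_drop=0.05, max_psi=0.2):
    """Flag the model when discrimination drops or the output distribution shifts."""
    return (baseline_auc - current_auc) > max_auc_drop or psi > max_psi


# Hypothetical windows of predicted probabilities.
launch_window = [0.1] * 50 + [0.2] * 50
recent_window = [0.8] * 50 + [0.9] * 50
psi = population_stability_index(launch_window, recent_window)
print(needs_review(baseline_auc=0.85, current_auc=0.84, psi=psi))
```

The PSI check needs no outcome labels, so it can run as soon as predictions are made, while the AUC check has to wait until outcomes are known.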

Create a pipeline for periodic retraining that includes fresh labeled cases and a validation routine that mirrors initial model testing. Treat maintenance as a clinical quality task that receives regular attention from both technical and clinical staff.

Practical Tips For Teams Getting Started

Form a small multidisciplinary group that includes radiology, IT, data scientists, and frontline clinicians to keep work grounded in clinical needs. Start with a narrow clinical question and a modest data set to build confidence and test feasibility before scaling to broader problems.

Use open-source tools and community data sets to lower early barriers and accelerate learning while you build internal capacity. Keep channels open for clinician feedback, and be ready to adjust both model inputs and output formats in response to real-world use.

Preparing For The Future Of Imaging Predictions

As models get better and compute costs fall, there will be more opportunities to predict long-term outcomes or to personalize follow-up schedules. The technical path will require stronger links between image features and mechanistic understanding of disease when the aim is to suggest targeted interventions.

Training loops that incorporate clinician feedback and real-world outcomes will make models more resilient and clinically useful. Adoption will follow from steady proof that the tools help clinicians make better choices for individual patients rather than simply adding another score to the chart.